ARTIKEL POPULAR

Recently, Google introduced a memory compression algorithm called TurboQuant, claiming it can reduce memory usage for large language models by at least six times without sacrificing accuracy, and achieve up to eightfold performance improvements in some tests. This innovation directly targets one of the key bottlenecks in AI computing — the heavy memory burden caused by high-dimensional vectors. By improving vector quantization techniques, the algorithm compresses data size while preserving computational precision.

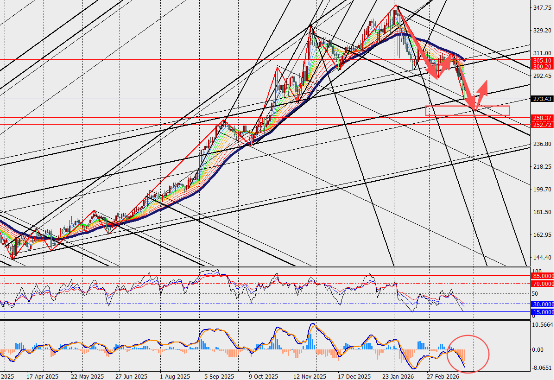

Following this announcement, shares across the global memory and storage supply chain came under pressure. Companies such as Micron Technology, Western Digital, and SanDisk saw declines, as investors worried that reduced memory requirements per unit of compute could weaken long-term demand for memory hardware.

This reaction is not isolated. Over the past year, generative AI demand has driven sustained increases in memory chip prices, even leading to temporary supply shortages. In this context, any technological breakthrough that improves efficiency and reduces hardware requirements tends to trigger market concerns about a potential inflection point in demand.

From a technical perspective, TurboQuant represents an iterative advancement of traditional vector quantization methods. Its core innovation lies in a two-stage compression mechanism that reduces data dimensionality while eliminating quantization errors, achieving both high compression rates and zero loss in accuracy.

This improvement means that under the same hardware conditions, AI models can process longer contexts or larger workloads — effectively increasing output per unit of compute rather than replacing hardware itself. As a result, the market is now divided on its implications.

Some investors worry that if memory requirements per task decline, overall hardware demand could shrink. However, mainstream investment banks and analysts argue the opposite, citing the Jevons Paradox — the idea that improvements in efficiency often lead to greater overall resource consumption, as lower costs stimulate broader adoption.

In the AI context, lower computing costs reduce deployment barriers and expand use cases, ultimately driving higher total demand for both computing and storage.

There are precedents supporting this view. Earlier concerns that low-cost large language model solutions from China would reduce demand for high-end computing power proved unfounded, as overall industry demand accelerated instead — demonstrating the positive feedback loop between efficiency gains and demand growth.

Market Interpretation:

From a fundamental perspective, the core issue in the memory industry remains on the supply side. Continued expansion of AI data centers and rising capital expenditure from cloud providers are keeping demand for DRAM and NAND at elevated levels, while supply constraints persist.

As a result, most institutions believe that TurboQuant is unlikely to materially alter the supply-demand balance in the short term. Its impact is more likely to reshape the efficiency curve over the medium to long term, rather than immediately reducing shipment volumes.