Cisco’s new Silicon One G300 can power gigawatt-scale AI clusters that support training, inference, and real-time agent workloads. It is designed to maximize GPU utilization and improve task completion times by 28 percent.

The Silicon One G300 will power the new Cisco N9000 and Cisco 8000 systems, which are designed for hyperscale cloud providers, emerging cloud operators, sovereign clouds, service providers, and enterprise customers. The company said the chip, the systems based on it, and related optical components will begin shipping this year. Cisco emphasized that the new systems feature a 100 percent liquid-cooled design that, combined with new optical technologies, can help customers improve energy efficiency by nearly 70 percent.

In addition, Cisco enhanced Nexus One, its data center network architecture, to make it easier for enterprises to operate AI networks on-premises or in the cloud. As AI training and inference continue to scale, data movement has become critical to efficient AI computing, and the network has effectively become part of the compute layer. Faster GPUs alone are not enough: the network must also provide scalable bandwidth and reliable, congestion-free data transmission.

Powered by the Silicon One G300, the new Cisco N9000 and Cisco 8000 systems deliver high-performance, programmable, and deterministic networking, enabling customers to fully utilize their compute resources and scale AI securely and reliably in production environments.

Last month, Nvidia introduced its next-generation AI computing platform, Vera Rubin, which includes several key networking and infrastructure components. In June 2025, Broadcom began shipping its Tomahawk 6 series switch chips.

Meanwhile, Cisco also announced a series of features to help enterprises securely adopt AI technologies while maintaining agent integrity and control over agent interactions.

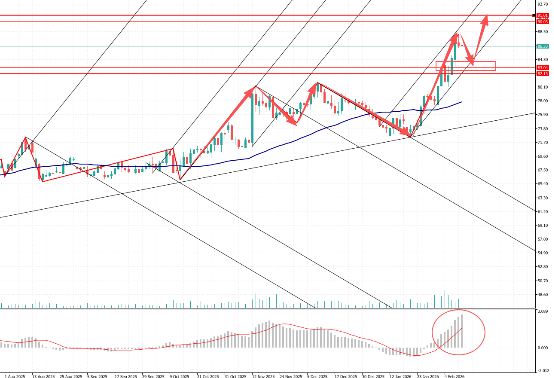

Market Interpretation:

Cisco's AI security features include an AI Bill of Materials, which provides centralized visibility and governance for AI software assets, including Model Context Protocol (MCP) servers and third-party dependencies, to safeguard the AI supply chain. The MCP directory helps discover, inventory, and manage risks across MCP servers and registries spanning public and private platforms, strengthening AI governance. Advanced algorithm testing expands the scope of AI security assessments. Real-time agent guardrails help protect both AI agents and applications.